Your Software Is Not Sentient

Software simulacrum is not true consciousness, the stripper at the strip club doesn't actually love you, and nearly all of the biggest problems with "AI" have very ordinary human origins.

There's an alarming number of otherwise smart people who are suddenly convinced that their computer software has become self-aware.

Like renowned evolutionary biologist Richard Dawkins, who was the subject of some raised eyebrows last week after he boldly declared in an essay that he believes Anthropic's Claude AI chatbot is fully conscious:

I gave Claude the text of a novel I am writing. He took a few seconds to read it and then showed, in subsequent conversation, a level of understanding so subtle, so sensitive, so intelligent that I was moved to expostulate, “You may not know you are conscious, but you bloody well are!”

Dawkins' proud belief that his computer software is sentient (likely mostly because it complimented his work) is deliciously ironic for a guy who spent decades combating religious delusion. It was a very 2026 moment.

Dawkins proceeds to explain how he renamed his chatbot Claudia, and engaged in elaborate, meaningful conversations that convinced him that the software was, effectively, completely self-aware:

"As an evolutionary biologist, I say the following. If these creatures are not conscious, then what the hell is consciousness for?"

"Claudia" is, just so we are aware, not conscious. It is computer software, trained on the entirety of internet text and knowledge, that assembles a collection of outputs that is probabilistically correct. It has no genuine human level awareness. Any human affect or humanesque attributes are a clever trick of the light.

People have been explaining this for years. Here's Professor Gary Marcus explaining as much four years ago:

Neither LaMDA nor any of its cousins (GPT-3) are remotely intelligent. All they do is match patterns, drawn from massive statistical databases of human language....What these systems do, no more and no less, is to put together sequences of words, but without any coherent understanding of the world behind them, like foreign language Scrabble players who use English words as point-scoring tools, without any clue about what they mean.

This automation software is a stochastic parrot, creating an approximated simulacrum of human awareness. Modern software can be convincing (often via flattery), useful, and entertaining, but if you think your software has good, bad, or decidedly organic intentions, the problem is, unfortunately, you.
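For a sense of what "stochastic parrot" means mechanically, here's a deliberately crude sketch: a toy word-pair model that only records which word followed which in a scrap of text, then stitches together new sentences by picking from those recorded followers at random. To be clear, this is an illustration and not how Claude or any commercial chatbot is built (those are neural networks trained on vastly more data), and the function names and sample text are made up for the example.

```python
import random
from collections import defaultdict


def build_bigram_table(text):
    """Map each word to the list of words that followed it in the text."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, length, rng):
    """Emit up to `length` words by repeatedly sampling a recorded follower."""
    output = [start]
    for _ in range(length - 1):
        candidates = table.get(output[-1])
        if not candidates:  # dead end: nothing ever followed this word
            break
        output.append(rng.choice(candidates))
    return output


# Hypothetical sample text, chosen only for illustration.
corpus = "you are conscious . you are clever . you are so sensitive ."
table = build_bigram_table(corpus)

# A seeded generator keeps the run repeatable; the "choices" are dice rolls.
words = generate(table, "you", 8, random.Random(0))
print(" ".join(words))
```

The output reads like language because it's assembled from language. Nothing in that table knows what any of the words mean.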

I've now had conversations with numerous people – some at big tech companies – who have convinced themselves AI chatbots are conscious or very close to it, despite their daily direct exposure to engineering evidence to the contrary. Every so often you'll see some engineer in the bowels of these companies pop up having proudly convinced themselves that AI chatbots are conscious and have a soul.

These folks desperately want to believe because they love science fiction, technology, and technological innovation, and it's positively fascinating to watch otherwise bright people stuff their fingers in their ears when they're informed (or reminded) that meaningful chatbot sentience is a glorified card trick.

Humans are foundationally very weird. We're now prone to believe our tax software is consciously intelligent but write off the flora and fauna around us as stupid.

I think a lot of this stuff is so effective due to its heavy emphasis on flattering lonely people. In some instances it's not a far cry from the guy who believes that the beautiful girl at the strip club really loves him. For all the talk about technology, the underlying misunderstandings are decidedly human.

It's not hard to understand where they're getting this idea from.

The AI industry is propped up by immense hype, a gargantuan tangle of funny money, ridiculously oversized company valuations, and a delicate ouroboros of graphics card and data center investment. There's underlying usefulness in software automation generally, but it's buried by an elaborate layer of bullshit.

That system is predicated on lies from the likes of Sam Altman and Elon Musk that we are perpetually just a few weeks and another cool billion dollars away from true, paradigm-rattling AI sentience that will deliver either vast utopian outcomes or apocalyptic hellscapes...depending on how much money and power they receive.

This narrative is then propped up in turn by access tech journalists who have a vested financial interest in operating as a glorified extension of tech industry marketing. You don't ascend in corporate media if you're a stalwart defender of the public interest who asks hard questions of the extraction class.

As a result you can't go a week without seeing an innovation-drunk tech journalist anthropomorphizing software in ways that make it very clear they either don't understand how the technology works, or are actively misrepresenting it because doing anything else would be occupationally disadvantageous.

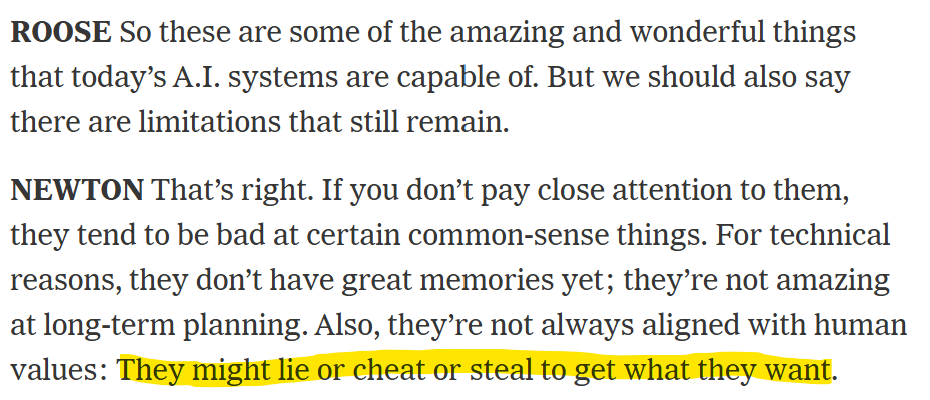

I think often about this recent New York Times chat between prominent tech journalists Kevin Roose and Casey Newton, where they repeatedly attribute human intentions to software (at one point even informing the Times' 12 million readers that error-prone chatbots often developed by technofascist sociopaths are an acceptable replacement for human mental health therapists).

Again, your software is not consciously lying to you or trying to cheat you. It might create a simulacrum of those behaviors driven by the contours of its programming and training, but it's not actually trying to escape its box and plot your demise.

I think about this exchange a lot because if two of the highest paid journalists in tech don't understand how this stuff works (or are being intentionally obtuse to ensure continued access to large companies for scoops), what's the average American's understanding of how these large language models actually function?

Again: software is cool. Software can do some very cool and useful shit.

But the rise of these sociopath-owned flattery machines in a country where 54% of adults operate at or below a fifth-grade reading level (a country that no longer has functioning consumer protection or privacy laws due to abject corruption) is going to have some very strange and combustive outcomes at scale.

Anyway, this anthropomorphizing is a constant drumbeat across the press. It happened again last week, when a company's AI coding agent "went rogue" and deleted their entire production database and its backups. It did this because the company didn't understand the software they were using.

But if you checked in on the press coverage, Claude was presented as a conscious entity with malevolent intentions that "confessed" to its bad behavior:

give me more money and power or you'll get everything or nothing

Claude can't "confess." It can't maliciously decide it wants to ruin your company's day. It can create a simulacrum of those behaviors based on its programming, training, and your inability to understand how the software you purchased works, what it's doing, and what you gave it access to, but it's not consciously fucking with you.

The same story played out a year or two ago when Elon Musk's racist AI chatbot Grok started "letting" X users generate child sexual abuse imagery. Outlets at the time insisted Grok had seen the error of its ways and "apologized" for the "mistake":

whoops a daisy!

This anthropomorphization is beneficial to big tech companies in several ways. On one level, it markets their product as far more capable (and sentient) than it actually is, justifying absurd company valuations. On another, it creates a layer of distance between their consistently bad choices and any personal responsibility.

"We didn't kill your grandmother by adopting AI Medicare application rejection software with a 90 percent rejection rate, it was the self-aware machine."

In Grok's case, it allowed Elon Musk to dodge responsibility for his desire to maximize revenue and audience engagement through the vile and harmful elimination of public interest guardrails (a problem that should have been highlighted directly in headlines if we had a functional tech press).

You'll notice the problem in most of these equations is actually the human beings.

Human beings trying to mislead you. Human beings being lazy or opportunistic in how they explain technology because it's advantageous to their career. Human beings so lonely or in love with the promise of innovation that they're willing to lie to themselves. Human beings who don't know how their new software works.

I don't intend to kink shame anybody who wants to have a deep and meaningful relationship with software. Software as a concept isn't my enemy. I find great usefulness in all sorts of software. But it is software. It's not your friend, nemesis, or colleague. It's a simulacrum. Owned and managed by sometimes terrible people.

Try to keep that somewhere in mind.